IoT 13: Make Dance Beats with Linux

Last Updated on May 12, 2022

First Published on March 22, 2022

In the last post, I said I would make music using LV2 plugins on Linux. Yes, I've finished it: "Fog in The Warm". Up to the last post, I explored how to use music tools on ARM (especially Raspberry Pi) as well as on x86_64 (AMD64). In this post, I use only x86_64 and focus on actually making music. When I get a Raspberry Pi 4B or a newer model, I'll report on playing music with ARM. I started making music because guitarists need to make music loops for their own playing.

Fog in The Warm

Recording Date: March 14, 2022. Composer, Programmer, and Editor: Kenta Ishii. Copyright © 2022 Kenta Ishii. All Rights Reserved.

HOORAY!

Here are the links to this music:

YouTube (Music Video in My Channel)

IT'S DISTRIBUTED!

* To distribute it, I use Repost by SoundCloud. This service also runs a community site, SCPlanner, for "repost for repost" opportunities on SoundCloud and Spotify. If you want to use this opportunity on Spotify, you need to enable a "Spotify for Artists" account and register your playlist. Note that Apple Music for Artists and Spotify for Artists let you update your artist images on those services. In any case, read "Distribution FAQs (Repost by SoundCloud)" in the SoundCloud Help Center.

-------------------------------------------------------------------

Let's talk about the technology. I used Ubuntu 20.04 LTS on x86_64 with MusE, Carla, Hydrogen, QjackCtl, and other useful tools. If you aren't curious about computer science (or aren't yet), I recommend LMMS, which lets you make your music within a single application. Note that if you are a student at a music school, you should learn LilyPond before making dance music. LilyPond can create MIDI output from your beautiful music sheets, including guitar tabs.

1. MusE: Version 3.0.2

I used MusE as a MIDI sequencer. Note that MusE isn't MuseScore; the two are unrelated. You won't find many tips for MusE by searching on Google, so just read the Wiki documentation on connecting MIDI IN/OUT, passing MIDI IN through a track, recording MIDI IN, and so on. There is a forum for MusE on LinuxMusicians. I use MusE as the JACK Master (Jack Transport timebase master) because MusE outputs the MIDI notes that control the plugins.

In the screen image above, you can see the track settings in the "Port" and "Ch" columns for the output to Carla. To record MIDI IN, follow these steps in order. First, click a name in the "Track" column. Next, select the appropriate JACK device and channel for MIDI IN in the "Input routes" menu, which pops up when you click the southeast-pointing green arrow on the upper side of the mixer window (just on the left side of the screen image). Then open the "Pianoroll" part window by drawing a part over the range you want in a track with the "pencil" tool and right-clicking that part. In the part window, click the red circle for recording and the MIDI jack icon (5-pin DIN) for enabling MIDI input. Confirm that "I" (green when the input monitor is enabled) and "R" (red when recording is enabled) are lit for the target track in the main window, and start playing by clicking the right arrow in the part window. In this case, I connected jack-keyboard to jack-midi-0 (in) on channel No. 1. During playback, tracks stop at the bar number set in "Len".

2. Carla: Version 2.4.2 (Build from Source)

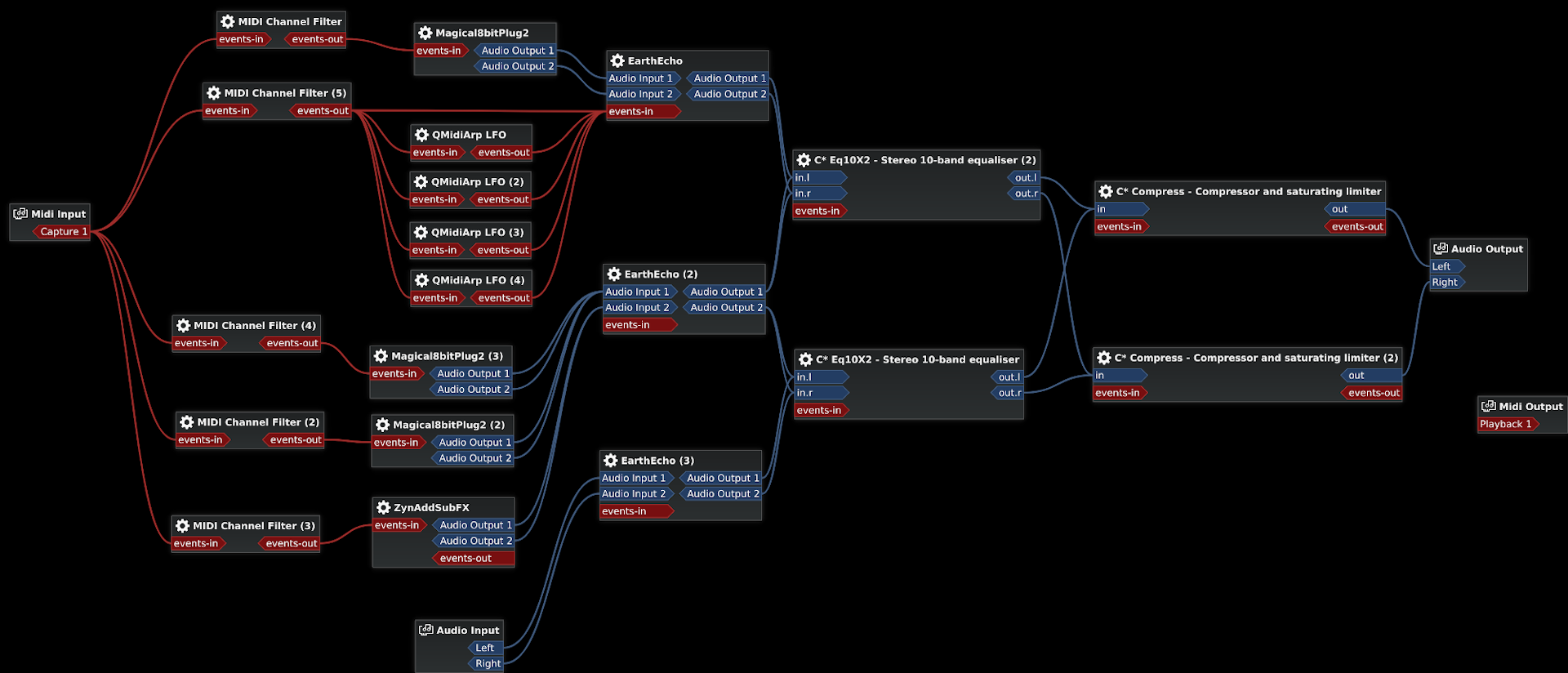

Carla is an audio plugin host. I use plugins in LV2 format and LADSPA format (C* Eq10X2 and C* Compress). I use several plugins in addition to the ones I introduced in the previous post. MIDI Channel Filter helps route MIDI messages only to the path you select. I use QMidiArp LFO to change parameter values of one instance of EarthEcho through the plugin host's MIDI mapping. QMidiArp LFO sends MIDI control change / continuous controller (CC) messages for a specific CC number, with values that step through a sequence over the time span you set. I also control the same CC from MusE. MusE doesn't send a CC message when the value of the CC doesn't change in its track, and I use this behavior to complement the sequence from QMidiArp LFO during playback.
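As a rough illustration of the two ideas above (a stream of stepped CC values, and skipping a message when the value has not changed), here is a minimal shell sketch. It only prints numbers and sends no MIDI. The function names `cc_ramp` and `cc_changes_only`, the linear ramp shape, and the step count are my own assumptions for illustration; they are not how QMidiArp LFO or MusE are implemented.

```shell
#!/bin/sh
# Hypothetical helpers for illustration only; no MIDI is sent.

# Print N controller values rising linearly from 0 to 127
# (0..127 is the valid range of a MIDI CC value).
cc_ramp() {
    awk -v n="$1" 'BEGIN {
        if (n < 2) { print 0; exit }
        for (i = 0; i < n; i++) print int(i * 127 / (n - 1) + 0.5)
    }'
}

# Drop a value when it equals the one just before it, mimicking
# "do not resend the CC if the value did not change".
cc_changes_only() {
    uniq
}

# A five-step ramp, one value per line.
cc_ramp 5

# Repeated values collapse to one line each time the value changes.
printf '%s\n' 10 10 10 64 64 10 | cc_changes_only
```

The second command prints 10, 64, and 10: only the changes survive, which is the gap that a second CC source can fill.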

The connections between the plugins are shown in the image above, taken from Carla.

3. Hydrogen: Version 1.0.0-rc1

It's a drum machine.

* You may be concerned about using Hydrogen with one-shot drum samples because of the strict terms of YouTube Content ID. My understanding is that one-shot drum samples never hold any copyright, i.e., they are not "possibly copyrighted" samples such as a short recording of someone playing a drum set. See the discussion on a YouTube forum. In law, copyright is defined in terms of creativity, and creativity isn't determined by duration. For example, gray noise never has copyright, however long it lasts, whereas a short expression on a musical instrument would have copyright even if it lasts a quarter of a second. I recommend uploading your music video to YouTube to check for copyright issues before publishing it widely.

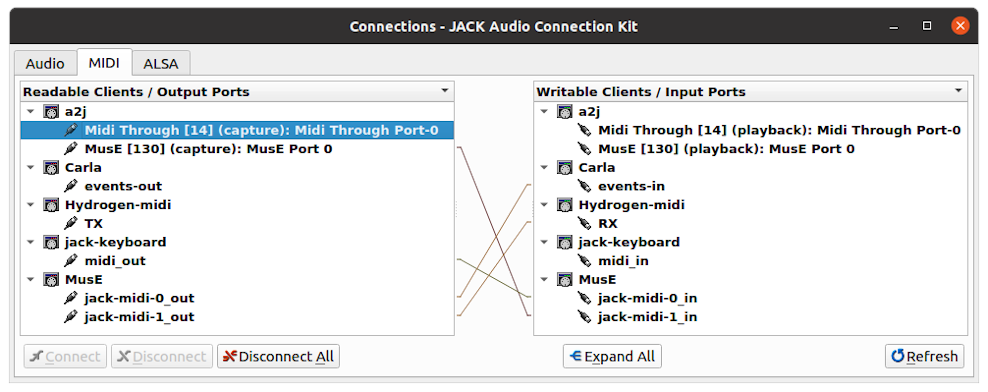

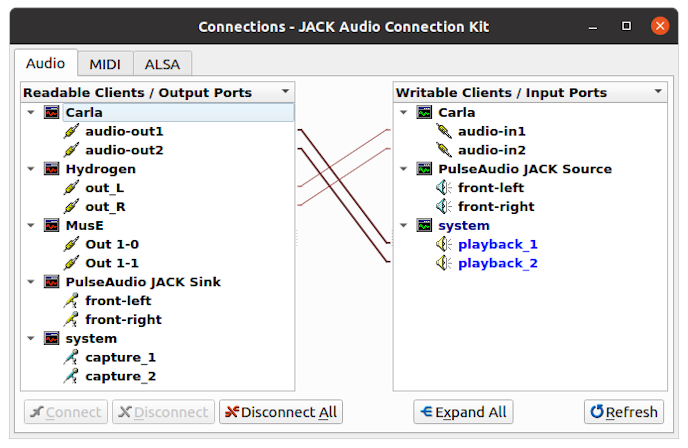

4. QjackCtl: Version 0.5.0

QjackCtl is a GUI for the JACK Audio Connection Kit (JACK) sound server. In the settings, I use 44100 for Sample Rate, 512 for Frames/Period, and 2 for Periods/Buffer, i.e., 1024 samples are buffered at once, giving a latency of about 23.2 milliseconds (1024 / 44100 seconds). 44100 Hz is the standard sample rate for Compact Disc, and audio at this rate can be uploaded to SoundCloud and YouTube. Note that SoundCloud recommends 48000 Hz and 16-bit depth, and YouTube recommends 44100 Hz and 24-bit depth in music videos (both assume stereo = 2 channels). For uploading, I made a WAV file storing linear PCM data at 44100 Hz, 16-bit depth, stereo. A powerful system can use fewer frames per period, but be careful about Xruns, which cause unpleasant skips in the track. Make sure to add "a2jmidid -e &" to "Execute script after Startup" on the "Options" tab in the "Setup" menu of QjackCtl to bridge ALSA (system) MIDI to JACK MIDI.
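To see how the latency follows from these three settings, here is a small sketch of the arithmetic (frames per period times periods per buffer, divided by the sample rate). The helper name `latency_ms` is mine and is not part of QjackCtl or JACK.

```shell
#!/bin/sh
# Buffer latency in milliseconds:
#   frames_per_period * periods_per_buffer / sample_rate * 1000
# latency_ms is a hypothetical helper name used only in this sketch.
latency_ms() {
    awk -v rate="$1" -v frames="$2" -v periods="$3" \
        'BEGIN { printf "%.1f\n", frames * periods / rate * 1000 }'
}

latency_ms 44100 512 2   # the settings used in this post: prints 23.2
latency_ms 44100 256 2   # half the frames per period: prints 11.6
```

Halving Frames/Period halves the latency, but it also gives the system half the time to fill each period, which is where Xruns come from.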

You need to enable Jack Transport in MusE, Hydrogen, and any other DAWs or sequencers to synchronize them over JACK. I set MusE as the Jack Transport timebase master (in the "Midi Sync" menu of MusE). In Hydrogen, just turn on "J.TRANS" on the upper side of the window.

5. jack-keyboard: Version 2.7.2

Virtual Piano Keyboard. You could also use smartphone apps to output MIDI notes. The "midi_in" port of jack-keyboard is useful for attaching any MIDI keyboard.

6. aj-snapshot: Version 0.9.9 (Build from Source)

Saves and restores connections in the JACK sound server.

7. jack-capture: Version 0.9.73

Records everything played through JACK. Make sure no Xruns are counted while recording.

8. Shell Script

To start these applications at once, I made a shell script. In this script, each sleep command gives the previously launched application time to finish initializing.

#!/bin/bash

qjackctl -s &

sleep 12

hydrogen -s fog_in_the_warm.h2song &

sleep 8

muse fog_in_the_warm.med &

sleep 8

carla fog_in_the_warm.carxp &

sleep 8

jack-keyboard &

sleep 12

aj-snapshot -drx fog_in_the_warm.xml
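The fixed sleep values above depend on how fast your machine is. As an alternative, a minimal sketch could poll until a condition holds instead of sleeping for a fixed time. `wait_until` is a hypothetical helper of mine, not part of JACK. The commented usage assumes that `jack_lsp` (the port-listing tool from JACK's example clients) is installed, and the "hydrogen" port name pattern is a guess, so check the real port names on your system with `jack_lsp` first.

```shell
#!/bin/bash
# wait_until TIMEOUT_SECONDS COMMAND [ARGS...]
# Re-run COMMAND once per second until it succeeds or TIMEOUT_SECONDS pass.
# Returns 0 on success and 1 on timeout. (Hypothetical helper for this sketch.)
wait_until() {
    local timeout=$1
    shift
    local waited=0
    until "$@" >/dev/null 2>&1; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep 1
        waited=$((waited + 1))
    done
    return 0
}

# Possible usage in place of "sleep 12" (assumes jack_lsp is installed):
#   qjackctl -s &
#   wait_until 30 jack_lsp || exit 1
#
# Possible usage in place of "sleep 8" after starting Hydrogen
# (the port name pattern is an assumption; verify it with jack_lsp):
#   hydrogen -s fog_in_the_warm.h2song &
#   wait_until 30 sh -c 'jack_lsp | grep -qi hydrogen' || exit 1
```

A port appearing in JACK doesn't guarantee that an application has finished loading its song file, so you may still want a short sleep before restoring connections with aj-snapshot.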

You can run this script like this:

cd ~/Desktop/fog_in_the_warm

./fog_in_the_warm.sh &

# Save connections in JACK

aj-snapshot fog_in_the_warm.xml

# Wait for Recording after Clicking "Start transport rolling" Button in QjackCtl.

jack_capture --port system:playback_1 --port system:playback_2 -jt fog_in_the_warm.wav

Make sure you save your files in each application. Otherwise, your work will be lost. This is one of the downsides of a multi-application system. There are several attempts to integrate these applications, including saving their data and state at once. I think that's the hard way because of the complexity of D-Bus with tunneling security measures.

9. Appendixes

If you want to record your guitar playing, you could use Audacity. If you're considering an alternative, Ardour is a good one. Ardour has no noise reduction, but Gtk Wave Cleaner provides excellent noise reduction. Note that Gtk Wave Cleaner monitors sound through ALSA, so you may need to select the JACK sink (PulseAudio JACK Sink) as the output device of your system (in Ubuntu, "Settings" > "Sound" > "Output"). Ardour can chase JACK Transport with several settings. For example, "Session" > "Properties" > "Timecode" > "Turn off JACK Time Master", "Edit" > "Preferences" > "Transport" > "Chase" > "Show Transport Masters Window" > select "Jack", and "Transport" > enable "Use External Positional Sync Source" (as of the latest development version, 7.0).

You can install these applications via apt (on Ubuntu 20.04 LTS) or build them from source.

sudo apt install lilypond muse carla hydrogen qjackctl a2jmidid jack-keyboard aj-snapshot jack-capture ardour gwc

# Install Additional Package for jack-keyboard

sudo apt install libcanberra-gtk-module
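After installing, a quick sketch like this could confirm that the commands used in this post are on your PATH. `missing_tools` is a hypothetical helper name of mine. The command names are the ones invoked elsewhere in this post, which can differ from the apt package names (for example, the jack-capture package is run as `jack_capture`).

```shell
#!/bin/sh
# Print each given command name that is NOT found on PATH.
# missing_tools is a hypothetical helper name for this sketch.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Command names as they are invoked elsewhere in this post.
missing_tools qjackctl hydrogen muse carla jack-keyboard \
              aj-snapshot jack_capture a2jmidid
```

If the last command prints nothing, everything was found. Any name it prints still needs to be installed or built from source.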

Make sure to add yourself to the audio group in advance.

sudo gpasswd -a [username] audio

# Confirm You're in The Group

groups [username]

# Relogin
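Note that `groups [username]` reads the group database, so it can list audio before your current session has picked up the change. As a small sketch, `id -nG` without a user name prints the groups of the current process, which shows whether logging in again has taken effect. `session_has_group` is a hypothetical helper name of mine.

```shell
#!/bin/sh
# Succeed if the current login session already carries the given group.
# session_has_group is a hypothetical helper name for this sketch.
session_has_group() {
    id -nG | tr ' ' '\n' | grep -qxF -- "$1"
}

if session_has_group audio; then
    echo "This session is in the audio group."
else
    echo "This session is not in the audio group yet; log out and log in again."
fi
```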

This is a memo of the packages needed when building from source. Note that additional packages may be needed depending on your system. aj-snapshot checks for its dependencies on your system using "./configure", and Ardour checks for its dependencies using "./waf configure" (see the instructions for building Ardour on Linux).

# aj-snapshot: Get Sources from SourceForge

sudo apt install libmxml1 libmxml-dev

# Ardour (7)

sudo apt install libboost-all-dev libglibmm-2.4-dev libarchive-dev \
    libtag1-dev vamp-plugin-sdk libvamp-sdk2v5 \
    librubberband-dev libaubio-dev libcppunit-dev \
    libcwiid-dev libwebsockets-dev libpangomm-1.4-dev \
    liblrdf0-dev libsuil-dev libgtkmm-2.4-dev

To make the music video, I used Shotcut. Shotcut can be installed via snap but not apt. The filter, Audio Waveform Visualization, is useful.

sudo snap install shotcut --classic

Lastly, I made the cover image for the music by taking a snapshot of my own music video with VLC media player ("Video" > "Take Snapshot" while paused). The "vlc" package can be installed via both apt and snap.

If you want more information about music on Linux, visit LinuxMusicians. In addition, I opened a community, r/MusicProcutionLinux, on Reddit. The series of Olympics in Asia has finished, and I expect to become busy with something, but I'll try to reply within a week.